Cognitive Memory Tree, a new memory paradigm built for agents: The Shift to Lossless, Infinite-Context, Fast, Token-Efficient Memory (CoMeT Agent Memory Protocol)

Why every long-running agent hits a wall at turn 50 — and how we stopped feeding ours garbage.

The Problem: The "Agent Memory Crisis"

Current AI agents are trapped in a "Bloat Loop." To make an agent "remember," developers either cram 50+ turns of noisy tool logs into the context window or fall back on flat RAG that misses the point. Well-polished markdown notes and wikis are powerful, but an LLM's context window is too small to hold them.

The Tool Bloat: Feeding massive, unparsed tool inputs/outputs into the context window creates a "garbage in, garbage out" loop. When the model has to swim through thousands of tokens of tool logs just to find a simple task, reasoning falls apart.

The Context Collapse, Not Context Engineering: We’re struggling to engineer a memory paradigm that survives the noise. Instead of "great" context, we get a fragmented mess where the mission is buried under system chatter, leading to high latency and even more hallucinations.

The Session Silo: Once a chat ends, the memory dies. Your agent has to re-learn your project, style, and goals every single time.

The Token Tax: Every tool log and raw search result is dumped into the prompt. This noise kills reasoning, spikes costs, and leads to "Agent Dementia"—where the model forgets the mission while staring at the logs.

The Similarity Trap: Traditional RAG retrieves what is mathematically similar, not what is logically relevant. It lacks the "narrative" of your work.

The Solution: The 3-Part Lossless, Fast, Token-Efficient Memory Protocol

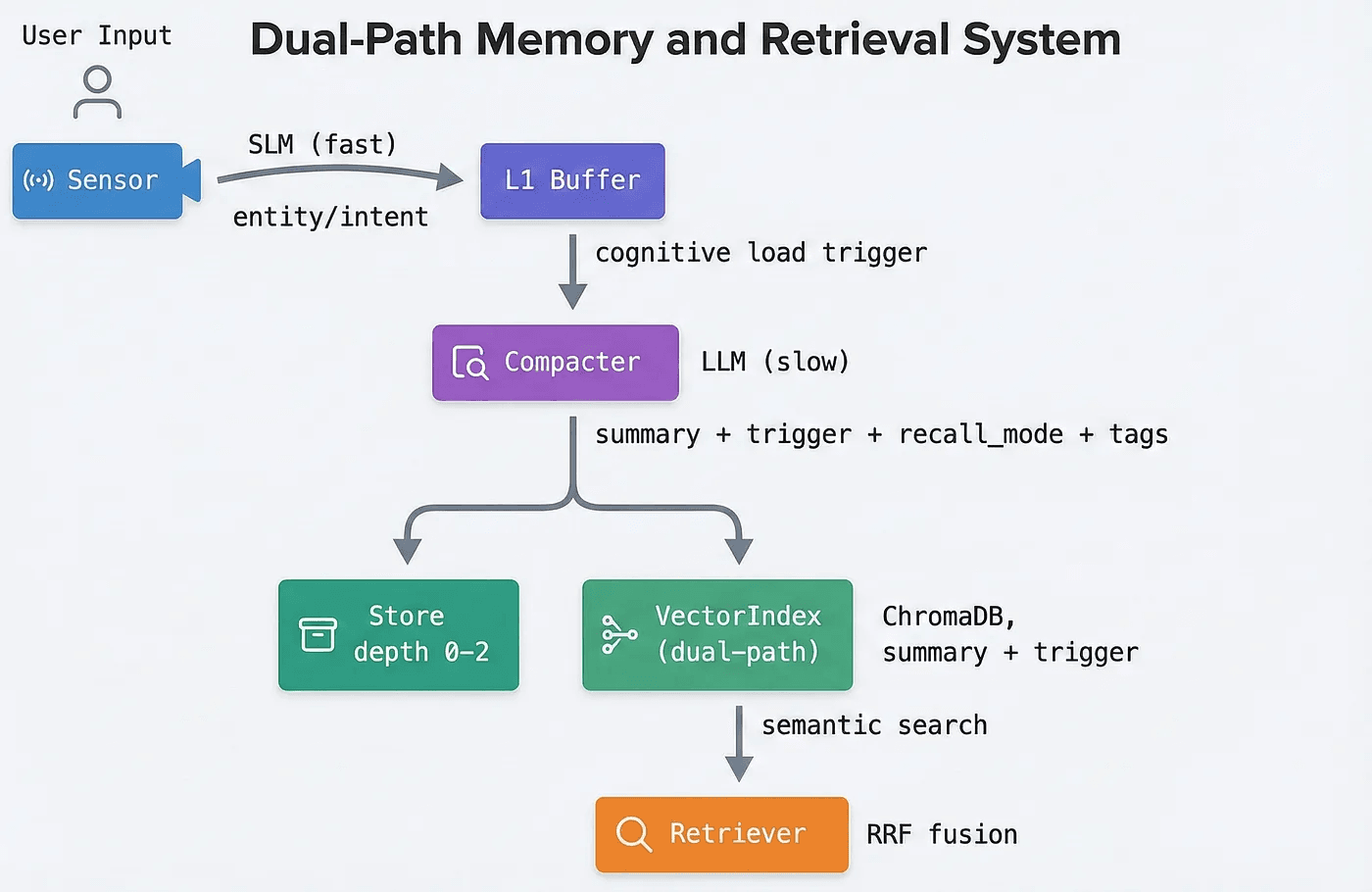

We are introducing a new paradigm of agent memory that is lossless, fast, and token-efficient. It works like a human brain, using a Dual-Speed Layer to manage information:

The Philosophy: We replace bloated context windows with a compacted, hybrid (index/graph) memory database that the agent reaches through fast, agentic tool calls. The active context window stays fresh, holding only the last 2-3 turns plus compacted memory entries (id, summary, and trigger); everything else is recalled through a memory tool. When a task requires historical context or details, the agent dynamically recalls only the resolution of memory it needs (summary, detailed, or raw). It’s fully compatible with current agent systems, but works as a drastically more personalized and focused memory engine.
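To make the "fresh context window" idea concrete, here is a minimal sketch of how a per-turn prompt could be assembled. It is not CoMeT's implementation: the function name `build_prompt`, the `keep_last` parameter, and the text layout are assumptions made for illustration. Only the ingredients (the last few turns plus id, summary, and trigger for each memory) come from the description above.

```python
def build_prompt(turns: list[str], memories: list[dict[str, str]], keep_last: int = 3) -> str:
    """Assemble a prompt from a compact memory index plus the most recent turns.

    Hypothetical helper: each memory dict carries only "id", "summary", "trigger".
    """
    # Keep only the newest turns verbatim; older turns live in the memory store.
    recent = turns[-keep_last:] if keep_last > 0 else []
    index_lines = [
        f"[{m['id']}] {m['summary']} (recall when: {m['trigger']})" for m in memories
    ]
    return "\n".join(["# Memory index", *index_lines, "# Recent turns", *recent])


turns = [f"turn-{i:02d}" for i in range(1, 11)]
memories = [
    {"id": "m-001", "summary": "User prefers Postgres.", "trigger": "choosing a database"}
]
prompt = build_prompt(turns, memories)
print(prompt)
```

The prompt grows with the number of memory index lines and the fixed window of recent turns, not with the full transcript, which is the property the section is describing.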

The Sensor (SLM): "When to Remember" A lightweight model that acts as the trigger. It continuously monitors session chats, web browsing history, and tool calls, making real-time decisions on when an interaction holds enough value to be saved.
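As a rough sketch of that gating step: the Sensor can be thought of as a scoring function plus a cutoff. Everything named here is hypothetical. `sense`, `toy_score`, the keyword list, and the 0.5 threshold are stand-ins for illustration; in the real system the score would come from the small model, not from keyword matching.

```python
from typing import Callable, Iterable


def sense(events: Iterable[str], score: Callable[[str], float], threshold: float = 0.5) -> list[str]:
    """Return the events worth saving, i.e. those scoring at or above the threshold."""
    return [event for event in events if score(event) >= threshold]


def toy_score(event: str) -> float:
    # Toy stand-in for the SLM (an assumption): flag decisions, errors, deadlines.
    keywords = ("decided", "error", "deadline")
    return 1.0 if any(k in event.lower() for k in keywords) else 0.0


events = ["ok", "We decided to use Postgres", "ls output: a.txt b.txt"]
to_save = sense(events, toy_score)
print(to_save)
```

Passing the scorer in as a callable keeps the gating logic separate from whichever model produces the score.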

The Compactor (SLM): "What to Remember" Once triggered by the Sensor, this model distills and structures the data and stores it in Chroma DB. It saves each memory across five distinct layers so the agent can later pull exactly the resolution it needs:

Summary: A high-level overview.

Detailed Summary: Deeper context for complex reasoning.

Trigger: Specific conditions for activating the memory.

Tags: Metadata for rapid retrieval.

Raw Source: The untouched original data (chats, logs, etc.).
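The five layers map naturally onto a single record type. The sketch below is one possible shape, not the published schema: the class name `MemoryRecord`, the field names, and the `recall` method are assumptions, and the storage step into Chroma DB is left out so the example stays self-contained.

```python
from dataclasses import dataclass, field
from typing import Literal

# Hypothetical names throughout; the post does not publish a schema.
Resolution = Literal["summary", "detailed", "raw"]


@dataclass
class MemoryRecord:
    memory_id: str
    summary: str            # high-level overview
    detailed_summary: str   # deeper context for complex reasoning
    trigger: str            # condition under which the memory should be recalled
    tags: list[str] = field(default_factory=list)  # metadata for rapid retrieval
    raw_source: str = ""    # untouched original data (chats, logs, ...)

    def recall(self, resolution: Resolution = "summary") -> str:
        """Return only the requested layer of this memory."""
        layers = {
            "summary": self.summary,
            "detailed": self.detailed_summary,
            "raw": self.raw_source,
        }
        if resolution not in layers:
            raise ValueError(f"unknown resolution: {resolution!r}")
        return layers[resolution]


record = MemoryRecord(
    memory_id="m-001",
    summary="User prefers Postgres over MySQL.",
    detailed_summary="During schema design the user rejected MySQL, citing JSONB support.",
    trigger="Choosing or configuring a database",
    tags=["database", "preference"],
    raw_source="user: let's not use MySQL, I need JSONB...",
)
print(record.recall("detailed"))
```

Because the raw source is kept alongside the summaries, compaction never throws information away; the agent simply chooses how much of it to pay for on a given turn.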

Benchmarks: Solving the 5.3x Degradation Gap

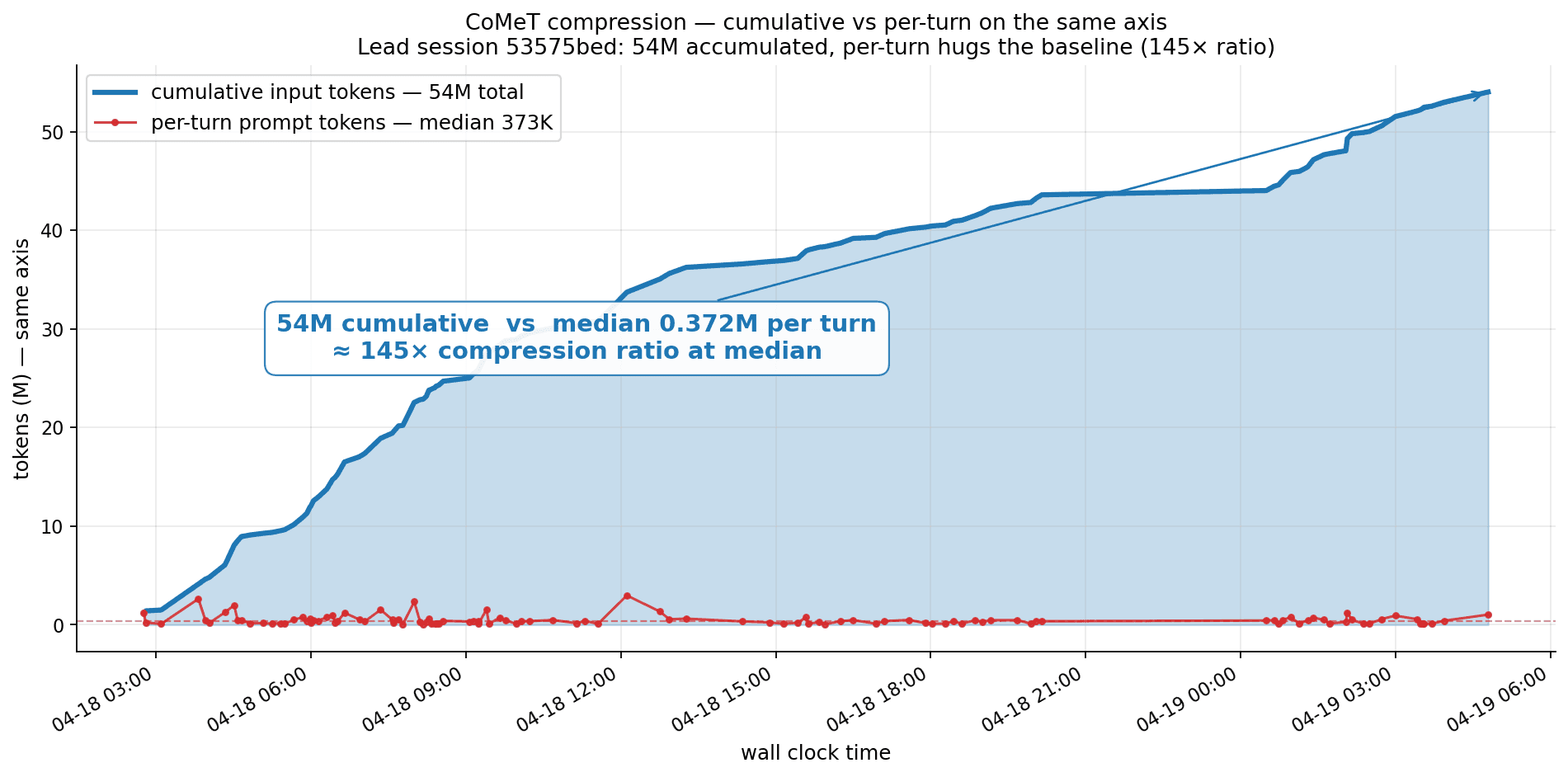

By 300+ turns, stuffing the context window severely degrades an LLM's ability to reason. CoMeT fixes this by holding the per-turn prompt at a median of 373K tokens while the session's cumulative input climbs to 54M tokens: a 145× compression ratio sustained across the entire 27-hour trace, entirely bypassing the "lost-in-the-middle" phenomenon. Crucially, this shifts memory scaling from a computationally expensive O(n²) attention bottleneck to a highly efficient O(n) retrieval process. Instead of wasting compute filtering through raw tool logs and historical chatter, the model regains the cognitive breathing room needed to focus strictly on complex reasoning and execution. The measurement is not a synthetic benchmark; it's a live multi-agent lead session with concurrent delegation, long-running tool trajectories, and cross-session handoffs. The per-turn line hugs the baseline throughout; cumulative growth never bleeds into the working set.
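The 145× figure can be checked directly from the two numbers quoted above. This is just arithmetic on the rounded values in the text (54M and 373K), so the result is approximate; the variable names are ours.

```python
cumulative_input_tokens = 54_000_000   # session total, as quoted (rounded)
median_prompt_tokens = 373_000         # per-turn median, as quoted (rounded)

compression_ratio = cumulative_input_tokens / median_prompt_tokens
print(f"{compression_ratio:.1f}x")  # 144.8x, which rounds to the quoted 145x
```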